On October 27, 2021, Google shared a blog post featuring Pokémon GO that explains how the game’s backend infrastructure works. We found the article quite insightful and decided to add more detail on the technical side.

Disclaimer: the author is familiar with most of the cloud-based infrastructure and the microservice architecture employed by Niantic. That said, it’s always possible to misinterpret something when doing a “black box” analysis. Everything expressed in this article is written in good faith with limited knowledge of the infrastructure.

You can watch the video accompanying the article here, but I do recommend you read up as well to get a better understanding of all the parts of the system:

High level overview of the Pokémon GO backend architecture

- Pokémon GO’s backend stack is not built from a single server or many servers. It’s a collection of microservices living in the cloud.

- Microservices are small, self-contained services that each perform a specialized task. In modern software development, it’s common to develop a microservice for each business need.

- Microservices are the core mechanism for scaling most modern software, as they allow developers to increase resource allocation where it counts. Spinning up more microservice instances is the modern equivalent of “throw more servers onto it”.

- In times of need, devs don’t have to spin up a ton of expensive servers. They can just spin up more instances of the services behind features that are experiencing increased load (for example Trainer Battles).
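To make the "scale only what is under load" idea concrete, here is a minimal sketch in Python. It uses the replica formula documented for the Kubernetes Horizontal Pod Autoscaler, `ceil(current_replicas * current_metric / target_metric)`, and ignores the autoscaler's tolerance band and min/max limits. The service names and load numbers are made up for illustration and are not Niantic's.

```python
import math

def desired_replicas(current_replicas: int, current_load: float, target_load: float) -> int:
    """Replica count following the Kubernetes HPA formula:
    ceil(current_replicas * current_load / target_load)."""
    if current_replicas < 1 or target_load <= 0:
        raise ValueError("need at least one replica and a positive target load")
    return max(1, math.ceil(current_replicas * current_load / target_load))

# Hypothetical services: (current replicas, observed CPU %, target CPU %)
services = {
    "trainer-battles": (10, 90.0, 50.0),  # under heavy load
    "friend-list": (4, 30.0, 50.0),       # mostly idle
}

def plan(services: dict) -> dict:
    """Compute a new replica count for every service independently."""
    return {name: desired_replicas(*stats) for name, stats in services.items()}

print(plan(services))  # {'trainer-battles': 18, 'friend-list': 3}
```

Only the busy service grows (10 to 18 instances), while the idle one shrinks (4 to 3). That is the cost advantage over adding whole servers for the entire game.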

All microservices live in Google Kubernetes Engine (GKE). GKE is the “magic fabric” that allows developers to start with a single cluster with a single node and scale a cluster up to 15,000 nodes, with far less operational effort than running Kubernetes yourself.

Kubernetes is the de facto standard for hosting microservices in the modern era. A cluster is a set of machines (nodes) that run those services together, like a bouquet of software products.

Niantic’s James Prompanya shared this: “We also have thousands of Kubernetes nodes running specifically for Pokémon GO, plus the GKE nodes running the various microservices that help augment the game experience. All of them work together to support millions of players playing all across the world at a given moment. And unlike other massively multiplayer online games, all of our players share a single “realm”, so they can always interact with one another and share the same game state.”

These are massive numbers, but let’s see how the data flows during a typical game play session.

From catching a Pokémon to cloud based spatial indexes

When you catch a Pokémon in GO, a handful of things happen:

- Your device makes a request to the Google Cloud backend where GO’s services are hosted

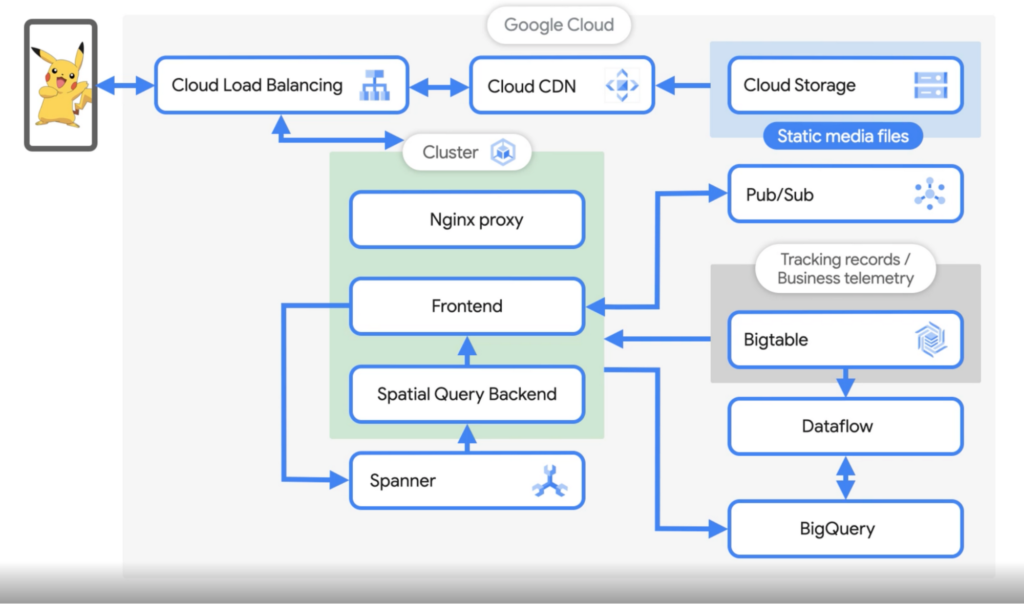

- This request hits the load balancer, which directs traffic to the clusters that are available at the moment

- The cluster picks up the request and handles it through something called NGINX. This is not the actual service that will process your request, but something akin to a traffic police officer.

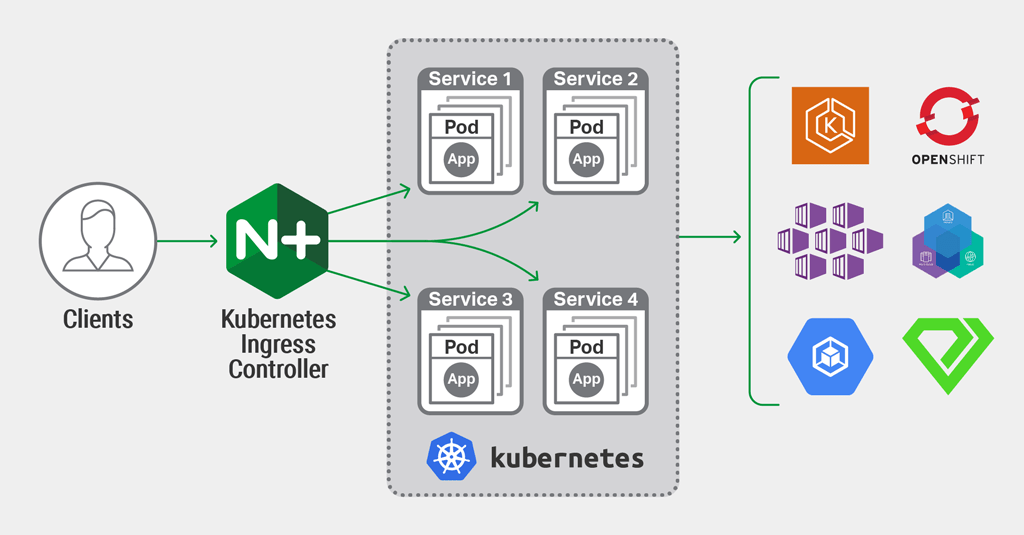

NGINX is what we call a reverse proxy: it takes in a ton of requests and forwards each one to the right service out of possibly thousands, depending on the type of request and whether the services are alive.
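The routing behaviour described here can be sketched in a few lines of Python. This is a toy in-memory model, not NGINX configuration and not Niantic's setup: the path prefixes, backend names and the round-robin choice are all assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass
class Backend:
    name: str
    healthy: bool = True

class ReverseProxy:
    """Toy reverse proxy: picks a backend by path prefix and health."""

    def __init__(self, routes: dict):
        self.routes = routes  # path prefix -> list of Backend
        self._counters = {prefix: 0 for prefix in routes}

    def route(self, path: str) -> str:
        # The longest matching prefix decides which service type handles the request.
        matches = [prefix for prefix in self.routes if path.startswith(prefix)]
        if not matches:
            return "404 no route"
        prefix = max(matches, key=len)
        # Only forward to backends that are alive.
        alive = [b for b in self.routes[prefix] if b.healthy]
        if not alive:
            return "502 no healthy backend"
        # Round-robin across the healthy backends for this prefix.
        index = self._counters[prefix] % len(alive)
        self._counters[prefix] += 1
        return alive[index].name

# Hypothetical routes and backends.
proxy = ReverseProxy({
    "/catch": [Backend("frontend-1"), Backend("frontend-2", healthy=False), Backend("frontend-3")],
    "/map": [Backend("spatial-1")],
})
print(proxy.route("/catch/123"))  # frontend-1
print(proxy.route("/catch/456"))  # frontend-3 (frontend-2 is skipped as unhealthy)
```

The client only ever talks to the proxy, so backends can be added, removed or restarted without the game app noticing.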

The picture above accurately shows how a typical NGINX + Kubernetes + third-party services architecture works, but in Niantic’s case, there are thousands of these. One of the most interesting services in each Pokémon GO cluster is the Spatial Query Backend service.

James shared this:

The third pod in the cluster is the Spatial Query Backend. This service keeps a cache that is sharded by location. This cache and service then decides which Pokémon is shown on the map, what gyms and PokéStops are around you, the time zone you’re in, and basically any other feature that is location based. The way I like to think about it is the frontend manages the player and their interaction with the game, while the spatial query backend handles the map. The front end retrieves information from spatial query backend jobs to send back to the user.

So these services (NGINX as a router, the frontend for player interaction and the Spatial Query Backend for the map) handle almost everything that goes on in the game. Of course, interactions like Raids and Trainer Battles likely have their own specialised clusters which were not described in this flow, but you get the picture.
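To illustrate what "a cache that is sharded by location" could look like, here is a minimal Python sketch. It is an assumption-heavy toy: the fixed latitude/longitude grid, the cell size, the shard count and the entity names are all invented, and the source does not say which spatial indexing scheme Niantic's service actually uses. Production systems typically rely on a proper spatial index rather than a flat grid like this.

```python
import math
from collections import defaultdict

CELL_DEGREES = 0.01  # assumed cell size, roughly 1 km of latitude
NUM_SHARDS = 8       # assumed number of cache shards

def cell_id(lat: float, lng: float) -> tuple:
    """Map a coordinate to the grid cell that contains it."""
    return (math.floor(lat / CELL_DEGREES), math.floor(lng / CELL_DEGREES))

def shard_for(cell: tuple) -> int:
    """Pick the shard responsible for a cell (deterministic, non-negative)."""
    return (cell[0] * 31 + cell[1]) % NUM_SHARDS

class SpatialCache:
    def __init__(self):
        # One dict per shard: cell -> list of entities located in that cell.
        self.shards = [defaultdict(list) for _ in range(NUM_SHARDS)]

    def add(self, lat: float, lng: float, entity: str) -> None:
        cell = cell_id(lat, lng)
        self.shards[shard_for(cell)][cell].append(entity)

    def nearby(self, lat: float, lng: float) -> list:
        """Entities in the player's cell and the eight surrounding cells."""
        row, col = cell_id(lat, lng)
        found = []
        for d_row in (-1, 0, 1):
            for d_col in (-1, 0, 1):
                cell = (row + d_row, col + d_col)
                found.extend(self.shards[shard_for(cell)].get(cell, []))
        return found

cache = SpatialCache()
cache.add(40.7580, -73.9855, "pokestop-a")  # hypothetical PokéStop
cache.add(35.6595, 139.7005, "gym-b")       # hypothetical gym, far away
print(cache.nearby(40.7585, -73.9851))      # ['pokestop-a']
```

A lookup only touches the handful of shards that own the cells around the player, which is what lets a single shared world be served without every query scanning the whole map.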

After the clusters handle your request, the data gets written into Spanner. Spanner is nuts, frankly speaking. Spanner is Google’s modern, cloud-based take on databases. Think of a traditional database, but completely distributed, replicated and sharded.

I really don’t want to go into this topic too much, but just remember this – Spanner is a big, fat, multi-shard database in the cloud and Niantic is using it.

From Spanner to analysis pipeline

And you thought Niantic doesn’t care about player feedback? Heh.

Every player action performed on the client is stored, processed and eventually written to another type of storage called Bigtable. Bigtable is Google’s database product that supports analytical and operational workloads while hiding the storage complexity from developers.

It’s essentially a huge scratchpad for Niantic. How huge? 5-10TB of data per day according to James:

Priyanka: A massive amount of data must be generated during the game. How does the data analytics pipeline work and what are you analyzing?

James: You are correct, 5-10TB of data per day gets generated and we store all of it in BigQuery and BigTable. These game events are of interest to our data science team to analyze player behavior, verify features like making sure the distribution of pokemon matches what we expect for a given event, marketing reports, etc.
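To put the quoted 5-10TB per day into perspective, here is a quick back-of-the-envelope conversion to an average ingest rate. It assumes decimal units (1 TB = 10^12 bytes) and a perfectly even spread over the day, which real traffic certainly is not.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

def average_mb_per_second(tb_per_day: float) -> float:
    """Average ingest rate in MB/s for a daily volume given in TB (decimal units)."""
    bytes_per_day = tb_per_day * 10**12
    return bytes_per_day / SECONDS_PER_DAY / 10**6

for tb in (5, 10):
    print(f"{tb} TB/day is about {average_mb_per_second(tb):.1f} MB/s on average")
# 5 TB/day is about 57.9 MB/s on average
# 10 TB/day is about 115.7 MB/s on average
```

So the pipeline has to absorb somewhere between roughly 58 and 116 MB of event data every second on average, with peaks during events presumably well above that.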

And what about cheating?

This blog post also gives us an interesting look at how Niantic does cheat detection:

- As all player actions are recorded, Niantic has the ability to analyse the logs as a stream

- Streaming is the process of analysing data as it comes in, rather than in big, scheduled chunks.

- Players’ actions are the key signal for detecting cheating. Combined with the log retention policy, this makes it clear that Niantic is trying to detect cheating as quickly as possible, rather than combing through historical records

Of course, James did not disclose any rules they use for cheat detection, but at least we know it’s in place and working.
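Since the real rules are not public, the following Python sketch only illustrates the streaming idea itself: each event is checked the moment it arrives, using a small amount of per-player state, instead of waiting for a scheduled batch job. The "impossible travel speed" rule, the threshold and the event fields are entirely hypothetical and are not taken from Niantic.

```python
import math
from dataclasses import dataclass

@dataclass
class Event:
    player: str
    timestamp: float  # seconds since some epoch
    lat: float
    lng: float

MAX_SPEED_MPS = 70.0  # hypothetical threshold (about 250 km/h)

def haversine_m(lat1, lng1, lat2, lng2):
    """Great-circle distance between two points in metres."""
    radius = 6_371_000
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lng2 - lng1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * radius * math.asin(math.sqrt(a))

def flag_suspicious(events):
    """Yield events as soon as they imply travel faster than MAX_SPEED_MPS."""
    last_seen = {}  # player -> most recent Event
    for event in events:
        previous = last_seen.get(event.player)
        if previous is not None:
            elapsed = event.timestamp - previous.timestamp
            distance = haversine_m(previous.lat, previous.lng, event.lat, event.lng)
            if elapsed > 0 and distance / elapsed > MAX_SPEED_MPS:
                yield event
        last_seen[event.player] = event

# Hypothetical event stream: one player walks, another "teleports" across the world.
stream = [
    Event("ash", 0, 40.7580, -73.9855),
    Event("misty", 0, 40.7580, -73.9855),
    Event("ash", 60, 40.7585, -73.9851),
    Event("misty", 60, 35.6595, 139.7005),
]
print([event.player for event in flag_suspicious(stream)])  # ['misty']
```

Because `flag_suspicious` is a generator that keeps only the last event per player, it can run over an unbounded stream and raise a flag within one event of the suspicious action.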

Parting words

Niantic gets a ton of flak for “flaky servers”, “buggy game” and “OMG lag”. We’re not here to defend that, but I do want to call for some clarification and fact-checking. Please use the following terminology when complaining on Twitter next time:

- “Potato microservice”

- “Interns are running the clusters again”

- “Kubernetes is sinking, and so is the Halloween Cup”

- “Niantic, please fix your cloud”

- “My dorm is a better cluster than this game”

Thanks for reading, and I hope this was somewhat informative!